Kubernetes is hip, it’s here to stay, and it comes up in just about any conversation. Today I spent some time converting my single-node homelab from docker-compose to a single-node Kubernetes stack. Because I only have a single server in my lab, I will be using minikube, a tool that lets you run a single-node Kubernetes cluster locally.

In this post, I’ll walk you through the basics to get your network sorted and your first deployment running. I will assume you already have a Linux machine with Docker installed.

The main idea is to get some hands-on experience running Kubernetes with a use-case that most of us will have.

Preparing the container host

To install minikube, make sure that Docker is installed correctly and the daemon is running. You can verify this by invoking docker ps.
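If you want to script that check, here is a minimal sketch. The helper names require_cmds and docker_ready are my own, not part of Docker or minikube, and I am assuming that docker info exits non-zero when the daemon cannot be reached.

```shell
#!/bin/sh
# Return success only if every named command is found on PATH.
# The name of each missing command is printed to stderr.
require_cmds() {
    missing=0
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing: $cmd" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Hypothetical helper: the docker binary exists and the daemon answers.
# Assumes `docker info` fails when it cannot reach the daemon.
docker_ready() {
    require_cmds docker && docker info >/dev/null 2>&1
}
```

Something like docker_ready || echo "Docker is not ready" at the top of a setup script is enough to fail early.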

Next, let’s install the dependencies. My host runs Alpine Linux, but in this post we will be using the latest binaries from Google.

If you’d rather use your distribution’s package repository (e.g. apk add kubectl), that should work too. Substitute yum, apt-get, or snap depending on the distribution you are running.

kubectl

kubectl is a command-line interface for running commands against Kubernetes clusters. The official documentation is located here, but it boils down to running the commands below:

curl -LO "https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl"

chmod +x ./kubectl

mv ./kubectl /usr/local/bin/kubectl

kubectl version --client -o yaml

If all went well, you should see output like:

clientVersion:
  buildDate: "2020-12-18T12:09:25Z"
  compiler: gc
  gitCommit: c4d752765b3bbac2237bf87cf0b1c2e307844666
  gitTreeState: clean
  gitVersion: v1.20.1

Note: if running the kubectl binary results in an error message like "not found", you need to install libc6-compat by running apk add libc6-compat.
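The download URL above hard-codes linux/amd64. If your homelab box is ARM-based, a small helper can pick the right path segment. This is a sketch under assumptions: release_arch and kubectl_url are hypothetical names, only two architectures are handled, and I am assuming the same URL layout holds for arm64.

```shell
#!/bin/sh
# Map a machine name (as printed by `uname -m`) to the architecture
# label used in the download path. Only two common cases are handled.
release_arch() {
    case "$1" in
        x86_64|amd64) echo amd64 ;;
        aarch64|arm64) echo arm64 ;;
        *) echo "unsupported architecture: $1" >&2; return 1 ;;
    esac
}

# Build the kubectl download URL for a version ($1) and machine name ($2),
# following the URL layout of the curl command in this post.
kubectl_url() {
    arch=$(release_arch "$2") || return 1
    echo "https://storage.googleapis.com/kubernetes-release/release/$1/bin/linux/$arch/kubectl"
}
```

Usage would look like curl -LO "$(kubectl_url v1.20.1 "$(uname -m)")".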

minikube

To install minikube, we will download the binary and install it into our /usr/local/bin/ directory as follows:

curl -LO https://storage.googleapis.com/minikube/releases/latest/minikube-linux-amd64

sudo install minikube-linux-amd64 /usr/local/bin/minikube

minikube version

If minikube was installed correctly you should now see the version output in your terminal. Next we will start minikube using the docker driver. The docker driver allows you to install Kubernetes into an existing Docker install. On Linux, this does not require virtualization to be enabled. In short this runs Docker-in-Docker.

minikube config set driver docker

minikube start

If you are running as root, you need to add the --force flag. This will force minikube to perform possibly dangerous operations. For more info, read this issue on GitHub. Rootless mode is currently not supported by the Docker driver. If you don’t use --force, you will be faced with the following error:

❌ Exiting due to DRV_AS_ROOT: The "docker" driver should not be used with root privileges.
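To avoid sprinkling --force everywhere, you could add it only when the script really runs as root. A minimal sketch, where minikube_start_args is a hypothetical helper of mine and --driver=docker is the per-invocation equivalent of the config setting above:

```shell
#!/bin/sh
# Print the arguments for `minikube start`, given a numeric user id.
# --force is added only for uid 0 (root).
minikube_start_args() {
    if [ "$1" -eq 0 ]; then
        echo "--driver=docker --force"
    else
        echo "--driver=docker"
    fi
}
```

You would then call minikube start $(minikube_start_args "$(id -u)"), leaving the substitution unquoted so the flags are split into separate arguments.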

So how does this work?

Let’s run a few commands to better understand what is happening.

docker ps will return all the containers running on the Docker host. Notice how there is only a single k8s Docker container running.

CONTAINER ID IMAGE COMMAND

5209ac2dd2d9 gcr.io/k8s-minikube/kicbase:v0.0.15-snapshot4 "/usr/local/bin/entr…"

kubectl get pods -A should return the pods running on our newly created Kubernetes “cluster”.

NAMESPACE NAME READY

kube-system coredns-74ff55c5b-46pcr 1/1

kube-system etcd-minikube 1/1

kube-system kube-apiserver-minikube 1/1

kube-system kube-controller-manager-minikube 1/1

kube-system kube-proxy-4x759 1/1

kube-system kube-scheduler-minikube 1/1

kube-system storage-provisioner 1/1

So why are there multiple pods running when we only have a single Docker container on our host? That’s because they are nested: minikube runs Docker-in-Docker. We can confirm this by running docker exec minikube docker ps:

IMAGE COMMAND STATUS NAMES

85069258b98a "/storage-provisioner" Up 10 minutes k8s_storage-...

bfe3a36ebd25 "/coredns -conf /etc…" Up 11 minutes k8s_coredns_...

10cc881966cf "/usr/local/bin/kube…" Up 11 minutes k8s_kube-pro...

b9fa1895dcaa "kube-controller-man…" Up 12 minutes k8s_kube-con...

ca9843d3b545 "kube-apiserver --ad…" Up 12 minutes k8s_kube-api...

0369cf4303ff "etcd --advertise-cl…" Up 12 minutes k8s_etcd_etc...

3138b6e3d471 "kube-scheduler --au…" Up 12 minutes k8s_kube-sch...
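The truncated names in the last column hint at how the inner containers map back to pods. As a sketch, and assuming the names follow the k8s_<container>_<pod>_<namespace>_<uid>_<attempt> layout (an assumption on my part about this Docker-based setup, not something minikube guarantees), you can turn them into a pod list. pods_from_names is a hypothetical helper.

```shell
#!/bin/sh
# Read container names on stdin (one per line) and print
# "<namespace>/<pod> <container>" for every name that follows the assumed
# k8s_<container>_<pod>_<namespace>_<uid>_<attempt> layout.
# Sandbox ("POD") containers and unrelated names are skipped.
pods_from_names() {
    awk -F_ '$1 == "k8s" && NF >= 6 && $2 != "POD" { print $4 "/" $3, $2 }'
}
```

Feeding it with docker exec minikube docker ps --format '{{.Names}}' | pods_from_names should give a list that lines up with kubectl get pods -A.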

Networking

Now that we have Docker-in-Docker with multiple bridge networks, the networking becomes slightly harder to follow.

Let’s go over our network briefly so it’s easier to understand how everything is connected. The network now looks as below (your CIDR ranges might be different):

Networks:
  - Name: Lan
    CIDR: 192.168.1.0/24
    Devices:
      - Host: MacBook
        IP: 192.168.1.2
      - Host: Container host
        IP: 192.168.1.9
        Networks:
          - Name: bridge
            CIDR: 192.168.49.0/24
            Containers:
              - Name: minikube
                IP: 192.168.49.2
                Networks:
                  - Name: k8s services
                    CIDR: 10.96.0.0/12
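When several ranges are nested like this, it helps to be able to check which network an address belongs to. Below is a small sketch in plain shell arithmetic. ip_to_int and cidr_contains are hypothetical helpers, they only handle IPv4, and they do no input validation.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to a 32-bit integer.
# The body runs in a subshell so the IFS change does not leak.
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# Succeed if the address ($2) falls inside the CIDR range ($1).
cidr_contains() (
    net=${1%/*}
    bits=${1#*/}
    mask=$(( (0xFFFFFFFF << (32 - $bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$net") & $mask )) -eq $(( $(ip_to_int "$2") & $mask )) ]
)
```

For example, cidr_contains 10.96.0.0/12 10.100.3.4 succeeds, while 10.112.0.1 falls just outside the services range because a /12 starting at 10.96.0.0 ends at 10.111.255.255.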

Discover your own

To find out how yours looks, try running the following commands on your container host:

# Get minikube container IP (All should result in the same IP)

minikube ip

docker inspect minikube | jq -r ".[].NetworkSettings.Networks[].IPAddress"

cat ~/.minikube/profiles/minikube/config.json | jq -r ".Nodes[].IP"

# Get Docker bridge network in use by minikube

docker network ls # Should return a minikube bridge network

docker network inspect minikube | jq -r ".[].IPAM.Config[].Subnet"

# Kubernetes Config (Service network)

cat ~/.minikube/profiles/minikube/config.json | jq -r ".KubernetesConfig"

Note: the commands above require jq to be installed. Alternatively, you could just use grep, e.g. docker network inspect minikube | grep -i subnet
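If you go the grep route but still want just the bare CIDR, something like the following works on the inspect output. It is a rough sketch rather than a real JSON parser: extract_subnets is a hypothetical helper that assumes the "Subnet": "..." key and value sit on one line.

```shell
#!/bin/sh
# Read `docker network inspect` JSON on stdin and print each Subnet value.
# This is plain pattern matching, not JSON parsing.
extract_subnets() {
    grep -o '"Subnet": *"[^"]*"' | sed 's/.*"\([^"]*\)"$/\1/'
}
```

Usage: docker network inspect minikube | extract_subnets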

Network graph

flowchart TB

isp(("isp - internet")) <--0.0.0.0/0 via wan0--> router

subgraph lan["lan 192.168.1.0/24"]

router["192.168.1.1 - router"]

host[192.168.1.9 - container host]

mbp["192.168.1.2 - macbook"]

router --route 10.96.0.0/12 via 192.168.1.9--> host

mbp --route 0.0.0.0/0 via 192.168.1.1--> router

end

host --"route 192.168.49.0/24 via br0"--> br0

host --"route 10.96.0.0/12 via 192.168.49.2"-->minikube

subgraph br0["192.168.49.0/24 - docker br0"]

minikube["192.168.49.2 - minikube container"]

end

subgraph services["10.96.0.0/12 - k8s-services"]

none["None: we will deploy services in pt2."]

end

minikube --k8s ServiceCIDR--> services

Enable routing

To get traffic from your lan routed to minikube and the Kubernetes services that will be running within, we need to enable IP forwarding on our container host, as well as make the next hop known to our router.

On our container host that means we need to set net.ipv4.ip_forward to 1. We also need to make sure our host firewall (iptables) allows forwarding.

Host config

On Alpine we need to update settings in /etc/sysctl.conf, then apply them by running: sysctl -p.

# Update sysctl.conf

echo 'net.ipv4.ip_forward = 1' >> /etc/sysctl.conf

sysctl -p

# Update route table with hop for Kubernetes Services network.

# 1. Get Kubernetes ServiceCIDR

cat ~/.minikube/profiles/minikube/config.json | jq -r ".KubernetesConfig.ServiceCIDR"

# 2. Get minikube IP

minikube ip

# 3. route add <ServiceCIDR> via <Minikube IP>

ip route add 10.96.0.0/12 via 192.168.49.2

# Allow forwarding in iptables

iptables -A FORWARD -i eth0 -j ACCEPT
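Before moving on to the router, it is worth checking that the host settings actually took effect. A minimal sketch with two hypothetical helpers: forwarding_enabled reads the kernel flag (the path can be overridden), and has_route looks for an exact destination and gateway pair in the output of ip route show.

```shell
#!/bin/sh
# Succeed if the given file contains "1".
# Defaults to the kernel's IPv4 forwarding flag.
forwarding_enabled() {
    [ "$(cat "${1:-/proc/sys/net/ipv4/ip_forward}" 2>/dev/null)" = "1" ]
}

# Read `ip route show` output on stdin and succeed if there is a route
# for the destination ($1) via the gateway ($2).
has_route() {
    awk -v dst="$1" -v gw="$2" \
        '$1 == dst && $2 == "via" && $3 == gw { found = 1 } END { exit !found }'
}
```

With the example addresses from this post, that would be forwarding_enabled && ip route show | has_route 10.96.0.0/12 192.168.49.2.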

Router configuration

Finally, we need to instruct our router to forward packets for the minikube bridge network, as well as the Kubernetes Services network to our container host. Remember, we can get those networks as follows:

# 1. Get Minikube bridge network

docker network inspect minikube | jq -r ".[].IPAM.Config[].Subnet"

# 2. Get Kubernetes ServiceCIDR

cat ~/.minikube/profiles/minikube/config.json | jq -r ".KubernetesConfig.ServiceCIDR"

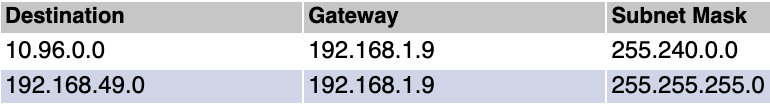

On my router (Tomato) those settings are under Advanced -> Routing -> Static Routing Table.
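Many router UIs ask for a dotted subnet mask rather than a prefix length, so the /12 and /24 need converting. Here is a small sketch for that. prefix_to_netmask and static_route_entry are hypothetical helpers, and the output order (destination, netmask, gateway) is simply my choice, not a format any router expects.

```shell
#!/bin/sh
# Convert a prefix length (0-32) to a dotted IPv4 netmask.
prefix_to_netmask() {
    mask=$(( (0xFFFFFFFF << (32 - $1)) & 0xFFFFFFFF ))
    echo "$(( ($mask >> 24) & 255 )).$(( ($mask >> 16) & 255 )).$(( ($mask >> 8) & 255 )).$(( $mask & 255 ))"
}

# Print "<destination> <netmask> <gateway>" for a CIDR ($1) and next hop ($2).
static_route_entry() {
    echo "${1%/*} $(prefix_to_netmask "${1#*/}") $2"
}
```

With the example values from this post, static_route_entry 10.96.0.0/12 192.168.1.9 prints 10.96.0.0 255.240.0.0 192.168.1.9, and the bridge network works the same way with 192.168.49.0/24.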

You should now be able to get traffic to minikube! Let’s test that from our laptop/desktop by running traceroute 192.168.49.2:

traceroute to 192.168.49.2 (192.168.49.2), 64 hops max, 52 byte packets

1 192.168.1.1 (192.168.1.1) 2.217 ms 1.861 ms 1.021 ms

2 192.168.1.9 (192.168.1.9) 1.196 ms 2.003 ms 1.582 ms

3 192.168.49.2 (192.168.49.2) 1.283 ms 2.201 ms 1.455 ms
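If you want to turn that check into something scriptable, you can pull the hop addresses out of the traceroute output and confirm the path goes through the container host. A sketch with two hypothetical helpers, assuming the hop lines look like the ones above (hop number first, then the address or hostname):

```shell
#!/bin/sh
# Read traceroute output on stdin and print the second field of each
# numbered hop line (the address, or hostname if names are resolved).
hop_addresses() {
    awk '$1 ~ /^[0-9]+$/ { print $2 }'
}

# Succeed if the traceroute output on stdin passes through the hop $1.
passes_through() {
    hop_addresses | grep -qxF "$1"
}
```

Usage: traceroute 192.168.49.2 | passes_through 192.168.1.9 && echo "routed via container host"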

Great, you’ve now completed your minikube setup and network configuration. In the next post, we’ll create and deploy our first Kubernetes Service!